Designers, makers, and others often use 3D printing to rapidly prototype a range of functional objects, from movie props to medical devices. Accurate print previews are essential so users know a fabricated object will perform as expected.

But previews generated by most 3D-printing software focus on function rather than aesthetics. A printed object may end up with a different color, texture, or shading than the user expected, leading to multiple reprints that waste time, effort, and material.

To help users envision how a fabricated object will look, researchers from MIT and elsewhere developed an easy-to-use preview tool that puts appearance first.

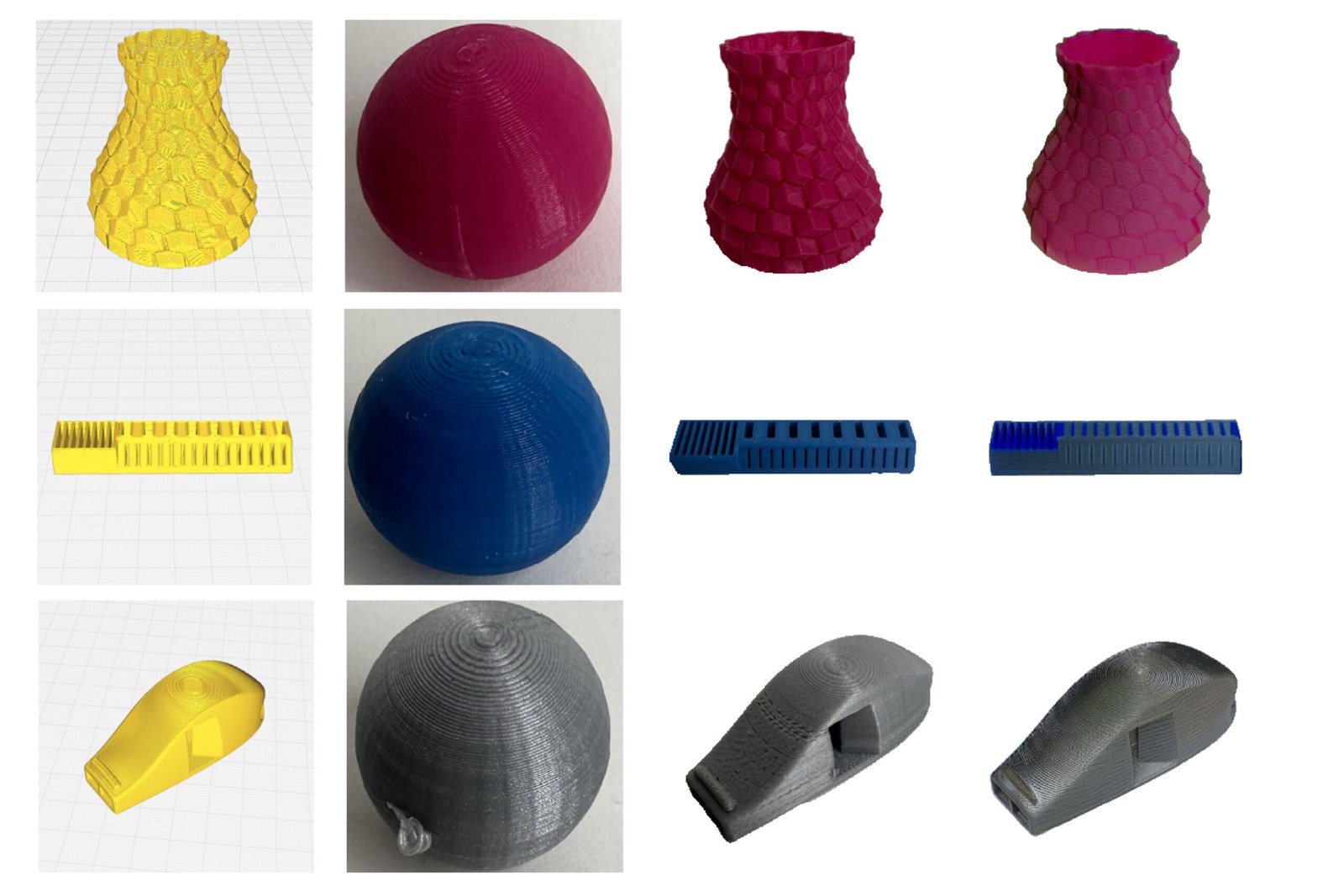

Users upload a screenshot of the object from their 3D-printing software, along with a single image of the print material. From these inputs, the system automatically generates a rendering of how the fabricated object is likely to look.

The artificial intelligence-powered system, called VisiPrint, is designed to work with a range of 3D-printing software and can handle any material example. It considers not only the color of the material, but also gloss, translucency, and how nuances of the fabrication process affect the object’s appearance.

Such aesthetics-focused previews could be especially useful in areas like dentistry, by helping clinicians ensure temporary crowns and bridges match the appearance of a patient’s teeth, or in architecture, by helping designers assess the visual impact of models.

“3D printing can be a very wasteful process. Some studies estimate that as much as a third of the material used goes straight to the landfill, often from prototypes the user ends up discarding. To make 3D printing more sustainable, we want to reduce the number of tries it takes to get the prototype you want. The user shouldn’t have to try out every printing material they have before they settle on a design,” says Maxine Perroni-Scharf, an electrical engineering and computer science (EECS) graduate student and lead author of a paper on VisiPrint.

She is joined on the paper by Faraz Faruqi, a fellow EECS graduate student; Raul Hernandez, an MIT undergraduate; SooYeon Ahn, a graduate student at the Gwangju Institute of Science and Technology; Szymon Rusinkiewicz, a professor of computer science at Princeton University; William Freeman, the Thomas and Gerd Perkins Professor of EECS at MIT and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL); and senior author Stefanie Mueller, an associate professor of EECS and Mechanical Engineering at MIT, and a member of CSAIL. The research will be presented at the ACM CHI Conference on Human Factors in Computing Systems.

Accurate aesthetics

The researchers focused on fused deposition modeling (FDM), the most common type of 3D printing. In FDM, print material filament is melted and then squirted through a nozzle to fabricate an object one layer at a time.

Generating accurate aesthetic previews is difficult because the melting and extrusion process can change the appearance of a material, as can the height of each deposited layer and the path the nozzle follows during fabrication.
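To see why layer height matters for appearance, it helps to count layers. The sketch below is only illustrative arithmetic, not part of VisiPrint: the function name `layer_count` and the sample dimensions are assumptions chosen for the example. It shows that halving the layer height doubles the number of stacked layers, and therefore the number of visible layer lines on the surface.

```python
import math


def layer_count(object_height_mm: float, layer_height_mm: float) -> int:
    """Return how many layers an FDM printer deposits to reach a given height.

    Hypothetical helper for illustration; real slicers also handle first-layer
    height, variable layer heights, and other settings not modeled here.
    """
    if object_height_mm <= 0 or layer_height_mm <= 0:
        raise ValueError("heights must be positive")
    # Round first so floating-point noise (e.g. 99.99999999) does not add
    # or drop a layer, then round up because a partial layer still prints.
    return math.ceil(round(object_height_mm / layer_height_mm, 9))


# Assumed example: a 20 mm tall object at two illustrative layer heights.
coarse = layer_count(20.0, 0.2)  # 100 layers
fine = layer_count(20.0, 0.1)    # 200 layers
print(coarse, fine)
```

The same object therefore shows twice as many, and finer, ridges at the smaller layer height, which is one reason a preview that ignores slicing settings can look different from the print.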

VisiPrint uses two AI models that work together to overcome those challenges.

The VisiPrint preview relies on two inputs: a screenshot of the digital design from a user’s 3D-printing software (called “slicer” software), and an image of the print material, which can be taken from an online source or captured from a printed sample.

From these inputs, a computer vision model extracts features from the material sample that are important for the object’s appearance.

It feeds those features to a generative AI model that computes the geometry and structure of the object, while incorporating the so-called “slicing” pattern the nozzle will follow as it extrudes each layer.
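As a rough intuition for what "extracting appearance features from a material sample" can mean, the sketch below computes two hand-crafted descriptors from a list of RGB pixels: the average color and the fraction of very bright pixels, used here as a crude stand-in for gloss. This is not VisiPrint's model, which is a learned computer vision model; the function name `material_features`, the `highlight_threshold` parameter, and the sample pixels are all assumptions made for illustration.

```python
from statistics import mean


def material_features(pixels, highlight_threshold=230):
    """Compute toy appearance descriptors from (r, g, b) pixels in 0..255.

    Hypothetical stand-in for a learned feature extractor: returns the mean
    color and the share of near-white pixels (a crude proxy for specular
    highlights, and therefore gloss).
    """
    if not pixels:
        raise ValueError("need at least one pixel")
    # Average each color channel separately.
    mean_color = tuple(round(mean(p[c] for p in pixels)) for c in range(3))
    # Treat a pixel as a highlight when its average brightness is very high.
    highlights = sum(1 for p in pixels if sum(p) / 3 >= highlight_threshold)
    return {
        "mean_color": mean_color,
        "highlight_fraction": highlights / len(pixels),
    }


# Assumed sample: two red pixels and two bright highlight pixels.
sample = [(200, 0, 0), (100, 0, 0), (255, 255, 255), (245, 245, 245)]
print(material_features(sample))
```

A learned model captures far richer cues, such as translucency and texture, but the idea is the same: reduce the material image to a compact description that a second model can use when generating the preview.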

The key to the researchers’ approach is a special conditioning method. This involves carefully adjusting the inner workings of the model to guide it, so it follows the slicing pattern and obeys the constraints of the 3D-printing process.

Their conditioning method uses a depth map that preserves the shape and shading of the object, along with an edge map that reflects the internal contours and structural boundaries.

“If you don’t have the right balance of those two things, you can end up with bad geometry or an incorrect slicing pattern. We had to be careful to combine them in the right way,” Perroni-Scharf says.
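The balance described in the quote above can be pictured with a toy example. The sketch below blends a depth map and an edge map, both given as small grids of values between 0 and 1, using a single weight. It is an assumption-laden illustration only: the article does not say how VisiPrint combines the two signals inside its generative model, and the function `blend_controls` and its `balance` parameter are hypothetical.

```python
def blend_controls(depth, edges, balance=0.5):
    """Blend a depth map and an edge map into one control signal.

    Hypothetical illustration: balance=0.0 uses only depth (shape and
    shading), balance=1.0 uses only edges (contours and slicing lines).
    Both maps are lists of rows of floats in the range 0..1.
    """
    if not 0.0 <= balance <= 1.0:
        raise ValueError("balance must be between 0 and 1")
    if len(depth) != len(edges) or any(
        len(d_row) != len(e_row) for d_row, e_row in zip(depth, edges)
    ):
        raise ValueError("depth and edge maps must have the same shape")
    # Per-pixel linear interpolation between the two maps.
    return [
        [(1.0 - balance) * d + balance * e for d, e in zip(d_row, e_row)]
        for d_row, e_row in zip(depth, edges)
    ]


# Assumed 1x2 maps: depth varies smoothly, the edge map marks one contour.
depth_map = [[0.25, 0.75]]
edge_map = [[1.0, 0.0]]
print(blend_controls(depth_map, edge_map, balance=0.5))
```

At either extreme, one signal is discarded entirely: with only depth, the slicing lines are lost, and with only edges, the overall shape and shading are lost. That loosely mirrors the failure modes the researchers describe.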

A user-focused system

The team also produced an easy-to-use interface where one can upload the required images and evaluate the preview.

The VisiPrint interface enables more advanced makers to adjust multiple settings, such as the influence of certain colors on the final appearance.

In the end, the aesthetic preview is intended to complement the functional preview generated by slicer software, since VisiPrint doesn’t estimate printability, mechanical feasibility, or likelihood of failure.

To evaluate VisiPrint, the researchers conducted a user study that asked participants to compare the system to other approaches. Nearly all participants said it provided better overall appearance as well as more textural similarity with printed objects.

In addition, the VisiPrint preview process took about a minute on average, which was more than twice as fast as any competing method.

“VisiPrint really shined compared to other AI interfaces. If you give a more general AI model the same screenshots, it might randomly change the shape or use the wrong slicing pattern because it has no direct conditioning,” she says.

In the future, the researchers want to address artifacts that can occur when model previews have extremely fine details. They also want to add features that allow users to optimize parts of the printing process beyond the color of the material.

“It is important to think about the way that we fabricate objects. We need to continue striving to develop methods that reduce waste. To that end, this marriage of AI with the physical making process is an exciting area of future work,” Perroni-Scharf says.

“‘What you see is what you get’ was the main thing that made desktop publishing ‘happen’ in the 1980s, as it allowed users to get what they wanted on the first try. It’s time to get WYSIWYG for 3D printing as well. VisiPrint is a great step in this direction,” says Patrick Baudisch, a professor of computer science at the Hasso Plattner Institute, who was not involved with this work.

This research was funded, in part, by an MIT Morningside Academy for Design Fellowship and an MIT MathWorks Fellowship.