Artificial intelligence is already proving it can speed up drug development and improve our understanding of disease. But to turn AI into novel treatments, we need to get the latest, most powerful models into the hands of scientists.

The problem is that most scientists aren’t machine-learning experts. Now the company OpenProtein.AI is helping scientists stay on the cutting edge of AI with a no-code platform that gives them access to powerful foundation models and a suite of tools for designing proteins, predicting protein structure and function, and training models.

The company, founded by Tristan Bepler PhD ’20 and former MIT associate professor Tim Lu PhD ’07, is already equipping researchers at pharmaceutical and biotech companies of all sizes with its tools, including internally developed foundation models for protein engineering. OpenProtein.AI also offers its platform to scientists in academia at no cost.

“It’s a very exciting time right now because these models can not only make protein engineering more efficient (which shortens development cycles for therapeutics and industrial uses), they can also enhance our ability to design new proteins with specific traits,” Bepler says. “We’re also excited about applying these approaches to non-protein modalities. The big picture is we’re creating a language for describing biological systems.”

Advancing biology with AI

Bepler came to MIT in 2014 as part of the Computational and Systems Biology PhD Program, studying under Bonnie Berger, MIT’s Simons Professor of Applied Mathematics. It was there that he realized how little we understand about the molecules that make up the building blocks of biology.

“We hadn’t characterized biomolecules and proteins well enough to build good predictive models of what, say, an entire genome circuit will do, or how a protein interaction network will behave,” Bepler recalls. “It got me interested in understanding proteins at a more fine-grained level.”

Bepler began exploring ways to predict the chains of amino acids that make up proteins by analyzing evolutionary data. This was before Google’s DeepMind released AlphaFold, a powerful prediction model for protein structure. The work led to one of the first generative AI models for understanding and designing proteins: what the team calls a protein language model.

“I was really excited about the classical framework of proteins and the relationships between their sequence, structure, and function. We don’t understand those links well,” Bepler says. “So how could we use these foundation models to skip the ‘structure’ component and go straight from sequence to function?”

After earning his PhD in 2020, Bepler joined Lu’s lab in MIT’s Department of Biological Engineering as a postdoc.

“This was around the time when the idea of integrating AI with biology was starting to pick up,” Lu recalls. “Tristan helped us build better computational models for biologic design. We also realized there’s a disconnect between the most cutting-edge tools available and the biologists, who would love to use these things but don’t know how to code. OpenProtein came from the idea of broadening access to these tools.”

Bepler had worked at the forefront of AI as part of his PhD. He knew the technology could help scientists speed up their work.

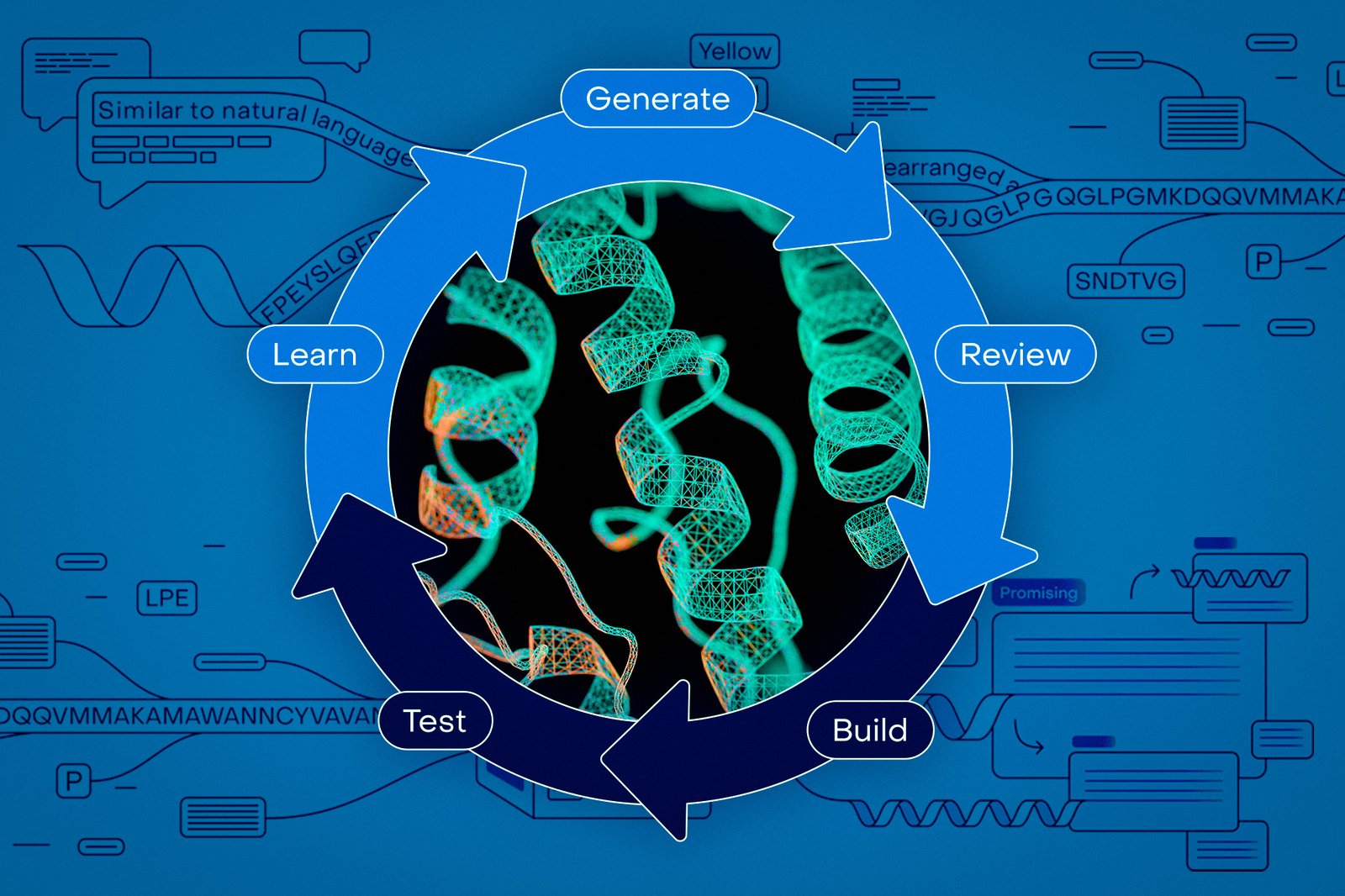

“We started with the idea of building a general-purpose platform for doing machine-learning-in-the-loop protein engineering,” Bepler says. “We wanted to build something that was user-friendly because machine-learning ideas are kind of esoteric. They require implementation, GPUs, fine-tuning, designing libraries of sequences. Especially at the time, it was a lot for biologists to learn.”

OpenProtein’s platform, by contrast, features an intuitive web interface for biologists to upload data and carry out protein engineering work with machine learning. It incorporates a range of open-source models, including PoET, OpenProtein’s flagship protein language model.

PoET, short for Protein Evolutionary Transformer, was trained on groups of proteins to generate sets of related proteins. Bepler and his collaborators showed it could generalize about evolutionary constraints on proteins and incorporate new information on protein sequences without retraining, allowing other researchers to add experimental data to improve the model.

“Researchers can use their own data to train models and optimize protein sequences, and then they can use our other tools to analyze those proteins,” Bepler says. “People are generating libraries of protein sequences in silico [on computers] and then running them through predictive models to get validation and structural predictions. It’s essentially a no-code front end, but we also have APIs for people who want to access it with code.”

The models help researchers design proteins faster, then determine which ones are promising enough for further lab testing. Researchers can also input proteins of interest, and the models can generate new ones with similar properties.

Since its founding, OpenProtein’s team has continued to add tools to its platform for researchers regardless of their lab size or resources.

“We’ve tried really hard to make the platform an open-ended toolbox,” Bepler says. “It has specific workflows, but it’s not tied specifically to one protein function or class of proteins. One of the nice things about these models is that they are very good at understanding proteins broadly. They learn about the whole space of possible proteins.”

Enabling the next generation of therapies

The large pharmaceutical company Boehringer Ingelheim began using OpenProtein’s platform in early 2025. Recently, the companies announced an expanded collaboration that will see OpenProtein’s platform and models embedded into Boehringer Ingelheim’s work as it engineers proteins to treat diseases like cancer and autoimmune or inflammatory conditions.

Last year, OpenProtein also released a new version of its protein language model, PoET-2, that outperforms much larger models while using a small fraction of the computing resources and experimental data.

“We really want to solve the question of how we describe proteins,” Bepler says. “What’s the meaningful, domain-specific language of protein constraints we use as we generate them? How do we bring in more evolutionary constraints? How do we describe an enzymatic reaction a protein carries out such that a model can generate sequences to perform that reaction?”

Moving forward, the founders are hoping to build models that account for the changing, interconnected nature of protein function.

“The area I’m excited about goes beyond protein binding events, using these models to predict and design dynamic functions, where the protein has to engage two, three, or four biological mechanisms at the same time, or change its function after binding,” says Lu, who currently serves in an advisory role for the company.

As progress in AI races forward, OpenProtein continues to see its mission as giving scientists the best tools to develop new treatments faster.

“As work gets more complex, with approaches incorporating things like protein logic and dynamic therapies, the current experimental toolsets become limiting,” Lu says. “It’s really important to create open ecosystems around AI and biology. There’s a risk that AI resources could get so concentrated that the average researcher can’t use them. Open access is super important for the scientific field to make progress.”